2025-12-09:

Watchdog and cron

You already know I despise systemd a bit more than wayland, but the future will be in the future, and the past will still be in the past.

I have message queues and worker bees. The workers die every hour, but my lovely cron resurrects them and forces them back to work.

It's just a single server and there won't be any more (but there will be fewer), so don't shame me, please.

There are a lot of workers (very few) and even more queues (even fewer). They lived in harmony, but I did something wrong and the workers entered a loop of death and started eating memory. And they ate it all.

Using simple spells like "it worked before, which means my changes from yesterday are at fault" it was quite simple to find the broken worker. It was killed, and the rest were told to keep working while ignoring the corpse nearby.

And they worked. For an hour. And then they didn’t. Run them manually — works. Leave them alone — doesn’t.

That was weird, as cron wasn't touched, memory wasn’t leaking, but the workers didn’t want to work.

The book of life (grep -i cron /var/log/syslog) told me that cron had been working fine, up to a point. And then it wasn't.

It turned out that when the workers ate all the memory, the watchdog killed cron. I doubt it realized that cron was the initial culprit; it was simply near the paw of death.

For the very first time in my life, watchdog killed cron! Unbelievable!
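
A hedged aside: if the paw of death was the kernel's OOM killer (an assumption; the story only says "watchdog"), the kernel log usually names its victims. Below is a minimal sketch of a filter for such lines. The function name oom_victims and the sample log line are made up for illustration.

```shell
# Hypothetical helper: keep only kernel-log lines that record an OOM kill.
# Assumes the usual kernel wording "Out of memory: Killed process <pid> (<name>)".
oom_victims() {
    grep -Ei 'out of memory: kill(ed)? process'
}

# Illustrative sample line, not taken from a real log.
sample='Dec  9 03:00:01 host kernel: Out of memory: Killed process 812 (cron) total-vm:8576kB'
victims="$(printf '%s\n' "$sample" | oom_victims)"
printf '%s\n' "$victims"
```

On a real machine the input would come from something like `journalctl -k | oom_victims` or `dmesg | oom_victims`.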

Would systemd timers survive? Would the paw of death smite them?

It's a mystery. I could run an experiment, but no. Empirical knowledge is only for people with weak faith.

#linux

#cron

#oom

#outofmemory

#memory

#memoryleak

#loopofdeath

#systemd

#systemdtimers

2025-11-28:

Someone ruined the internet (x)

We all know that email@example.com is an email address. But what does an email address mean?

It means that on a server with the address example.com there is an account named email.

We also know it's an email address, so we know which port to poke and what protocol to choose (details in DNS).

And every *nix account can be written as user1@computer1, which means that on computer1 there exists an account user1.

The symbol @ literally means at:

user1-at-computer1

email-at-example.com

Cozy. Uniform.
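
The "at" reading is mechanical enough to write down. A small sketch using plain POSIX parameter expansion; the variable names are only for illustration:

```shell
# Split "account@machine" into its two halves.
addr='email@example.com'   # sample address from the text above
user="${addr%@*}"          # everything before the last "@"
host="${addr##*@}"         # everything after the last "@"
printf '%s at %s\n' "$user" "$host"   # prints: email at example.com
```

The same two lines work for user1@computer1, which is the whole point: one shape for both.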

And then Twitter comes along and decides that everyone will be @user2, and breaks the internet.

TWITTER SHOULD HAVE USED user2@!!!111

We lost everything. Dark times are ahead.

#deep

#blasphemy

2025-11-27:

WordPress action hooks and filter hooks

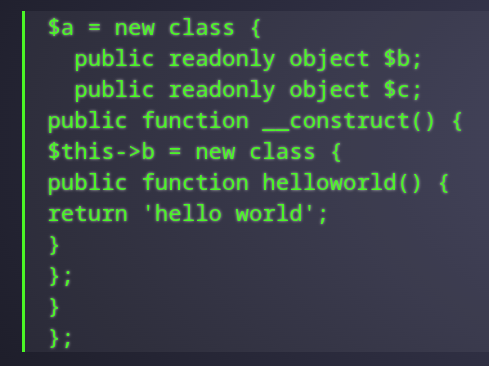

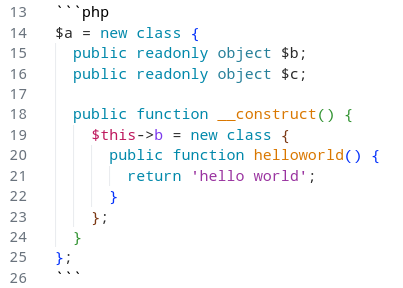

There are two types of hooks: Actions and Filters.

Actions allow you to add data or change how WordPress operates.

Filters give you the ability to change data during the execution of WordPress Core, plugins, and themes.

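    // wp-includes/plugin.php: an action is registered as a filter.
    function add_action( $hook_name, $callback, $priority = 10, $accepted_args = 1 ) {
        return add_filter( $hook_name, $callback, $priority, $accepted_args );
    }

    // WP_Hook::has_filters(): true if any priority level holds at least one callback.
    public function has_filters() {
        foreach ($this->callbacks as $callbacks) {
            if ($callbacks) {
                return true;
            }
        }
        return false;
    }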

#php

#wordpress

#why

2025-11-22:

.ssh/unknown_hosts

When ssh connects to an unknown host, it asks the user to type "yes". No one knows why we should do it, but ssh will not connect without it.

You can avoid this prompt in two ways:

# 1. Disable security check (unsafe)

$ ssh -o "StrictHostKeyChecking no" user@example.com

# 2. Automatically make remote host known

$ ssh-keyscan -t rsa example.com >> ~/.ssh/known_hosts

$ ssh user@example.com

There was no warning in the second case! And not because we disabled the safety check: we made the host known to us!

Now you understand that professionals make different choices. Be a professional, choose safety!
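
One caveat worth a sketch: ssh-keyscan trusts whatever answers on the wire, so the extra-professional move is to look at the fingerprint before appending. The helper below is hypothetical (trust_host is not part of OpenSSH) and assumes the host offers an ed25519 key.

```shell
# Sketch: fetch a host key, show its fingerprint, and only then trust it.
# "trust_host" is a hypothetical helper name, not an OpenSSH command.
trust_host() {
    host="$1"
    tmp="$(mktemp)" || return 1
    # Fetch the host's ed25519 public key without logging in.
    ssh-keyscan -t ed25519 "$host" > "$tmp" 2>/dev/null
    if [ ! -s "$tmp" ]; then
        echo "no key received from $host" >&2
        rm -f "$tmp"
        return 1
    fi
    # Print the fingerprint so it can be compared with one obtained out of band.
    ssh-keygen -lf "$tmp"
    printf 'Add %s to known_hosts? [y/N] ' "$host"
    read -r answer
    if [ "$answer" = "y" ]; then
        cat "$tmp" >> "$HOME/.ssh/known_hosts"
    fi
    rm -f "$tmp"
}
```

Usage would be `trust_host example.com`, followed by the usual `ssh user@example.com`. Defining the function does nothing by itself; the network is only touched when it is called.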

#linux

#ssh

#safety

2025-11-18:

OTP registration

What if we look at OPIE and OTPW and decide we don't want them?

And we do registration by OTP generation instead. Single-factor. As long as you have access to the key, you have access to the service.

No need to know any password, no need for e-mails or logins.

Cool! Dumb and cool!

#whaif

#hearmeout

#idea

#otp

2025-11-16:

Zola SSG: the story of success

Many candles did I burn to figure out how the static site generator zola works.

It uses .md files, and it seemed like the safest option.

Apparently it can't render ``` properly: for some unknown reason it wraps everything in layers of <code> and <p>, even with highlighting disabled.

That's unfortunate: tripping over the very text you shouldn't touch.

#zola

#web

#ssg

#markdown

#wtf

2025-11-10:

git vs git --bare

A git repository comes in two kinds: normal and --bare. Normal is roughly a git client, --bare is roughly a git server. Not exactly, but --bare exists to be a remote for normal repositories. It also has no working tree, so there are no checked-out files inside.

You can create a --bare repository on your VPS and synchronize with it over ssh, avoiding services like github. Everyone with ssh access can use it as a remote.

Astoundingly, a normal repository creates a directory for itself at <repo>/.git, while a --bare one uses the given path itself as .git.
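
A sketch of that round trip that runs on one machine: a temp directory plays the VPS, and a local path plays the ssh URL. The paths, the demo identity, and the commit message are all made up.

```shell
# A temp directory stands in for the VPS so this runs without any server.
work="$(mktemp -d)"
git init --quiet --bare "$work/server.git"   # the remote: history only, no files
git init --quiet "$work/client"              # a normal repo with a work tree

# Over ssh the URL would look like user@example.com:path/server.git instead.
git -C "$work/client" remote add origin "$work/server.git"

echo hello > "$work/client/README"
git -C "$work/client" add README
git -C "$work/client" -c user.name=demo -c user.email=demo@example.com \
    commit --quiet -m "first commit"
git -C "$work/client" push --quiet origin HEAD

# The commit is now visible from the bare side.
git --git-dir="$work/server.git" log --oneline
```

Anyone else with access would then `git clone` the same URL and push to it like to any other remote.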

$ git init ./git-normal/

Initialized empty Git repository in /tmp/.dotfiles/git-normal/.git/

$ git init --bare ./git-bare/

Initialized empty Git repository in /tmp/.dotfiles/git-bare/

$ ls -lah git-normal/

.git/

$ ls -lah git-bare/

hooks/

info/

objects/

refs/

HEAD

config

description

Funny joke: if you put --bare in ./.git/, it will look like ./ has a git repository, and it is there, but it is not!

fatal: this operation must be run in a work tree
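
If you are ever unsure which kind you are standing in, git can tell you. A minimal sketch with throwaway directories (the names mirror the listing above):

```shell
# Ask git directly whether a repository is bare.
demo="$(mktemp -d)"
git init --quiet "$demo/git-normal"
git init --quiet --bare "$demo/git-bare"

normal_kind="$(git -C "$demo/git-normal" rev-parse --is-bare-repository)"
bare_kind="$(git -C "$demo/git-bare" rev-parse --is-bare-repository)"
echo "git-normal: bare=$normal_kind"   # prints: git-normal: bare=false
echo "git-bare:   bare=$bare_kind"     # prints: git-bare:   bare=true
```

The answer comes from core.bare in the repository's config, which is also what makes the joke above work.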

#linux

#howto

#man

#git

2025-09-28:

Local decentralized DNS without DNS: Multicast DNS (mDNS)

You have two linux items (others will work too, but you'll have to google how) that you want to talk to each other.

The simplest way is to use the ip, but it only works once. Tomorrow the item will get a different ip; you can't win.

The simplest way is to add an alias to /etc/hosts:

192.168.0.48 destination

And use the alias everywhere:

$ scp kitty.gif user@destination:

But the ip will change anyway. But you will update it in a single place. But you will update it.

The simplest way to fix this is to install a local DNS server, make it the main DNS server for the network, and proxy DNS queries through it... no, it isn't.

Wouldn’t it be a breeze to avoid editing by hand?

It is 2025, and 25 years ago Multicast DNS (mDNS) was invented: using multicast requests, it creates a local, dynamic, decentralized DNS that you don't have to take care of. But only within a single network. And only a single hostname per item.

When linux converts names into numbers, it uses the Name Service Switch (NSS). Its config, /etc/nsswitch.conf, says where to look and in what order:

$ cat /etc/nsswitch.conf

# Name Service Switch configuration file.

# See nsswitch.conf(5) for details.

passwd: files systemd

group: files [SUCCESS=merge] systemd

shadow: files systemd

gshadow: files systemd

publickey: files

hosts: mymachines resolve [!UNAVAIL=return] files myhostname dns

networks: files

For "hosts" it says to check local containers first (mymachines).

Then it goes to systemd-resolved (resolve), which replaces nss-dns. Unless systemd-resolved is unavailable, the lookup stops there.

Only if it is unavailable does the lookup fall back to /etc/hosts (files), the local hostname (myhostname), and classic DNS (dns).

Fun fact: we can add another service to the list. What if we add Multicast DNS (mDNS)?

Obviously, literally every item gets both the server and the client part. We'll do the steps below on each of them, for harmony.

https://wiki.archlinux.org/title/Avahi

Install the nss-mdns package (libnss-mdns on Debian), which pulls in avahi as a dependency. This is the service we'll add to the NSS hosts line:

# pacman -S nss-mdns

# emerge -av sys-auth/nss-mdns

# apt-get install libnss-mdns avahi-daemon avahi-utils

Next, add mdns_minimal [NOTFOUND=return] to the hosts line in /etc/nsswitch.conf:

# hosts: mymachines resolve [!UNAVAIL=return] files myhostname dns

hosts: mymachines mdns_minimal [NOTFOUND=return] resolve [!UNAVAIL=return] files myhostname dns

# hosts: mymachines mdns_minimal resolve [!UNAVAIL=return] files myhostname dns

[NOTFOUND=return] stops the lookup for *.local names as soon as mDNS says "not found", so *.local entries in /etc/hosts stop working. Remove it if you want everything to keep working as before, only better.

You can also catch domains beyond *.local, but we don't need that. We'll use /etc/hosts for those.
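
The edit itself can be rehearsed on a scratch copy before touching the real file. A sketch assuming GNU sed and the exact stock hosts line shown above; the temp file stands in for /etc/nsswitch.conf:

```shell
# Rehearse the nsswitch.conf edit on a scratch file, not on /etc.
conf="$(mktemp)"
printf 'hosts: mymachines resolve [!UNAVAIL=return] files myhostname dns\n' > "$conf"

# Insert mdns_minimal right before "resolve", unless mdns is already listed.
if ! grep -q '^hosts:.*mdns' "$conf"; then
    sed -i 's/^\(hosts:.*\) resolve /\1 mdns_minimal [NOTFOUND=return] resolve /' "$conf"
fi

cat "$conf"
# prints: hosts: mymachines mdns_minimal [NOTFOUND=return] resolve [!UNAVAIL=return] files myhostname dns
```

Running it twice leaves the line unchanged, which is the only reason the grep guard is there.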

Set the host name in /etc/avahi/avahi-daemon.conf, then restart and check:

$ grep host-name /etc/avahi/avahi-daemon.conf

host-name=source

# systemctl enable avahi-daemon.service # start at boot

# systemctl restart avahi-daemon.service # (re)start now

$ avahi-browse --all --verbose --resolve --terminate

$ avahi-resolve-host-name source.local

source.local 192.168.100.101

$ ping source.local

PING source.local 56 data bytes

64 bytes from source.local : icmp_seq=1 ttl=64 time=0.031 ms

64 bytes from source.local : icmp_seq=2 ttl=64 time=0.067 ms

64 bytes from source.local : icmp_seq=3 ttl=64 time=0.056 ms

^C

The problem is that we can have only one domain per item. If you want to have multiple local domains like:

git.local

server.local

You can easily do this via /etc/avahi/hosts, but there you also have to hardcode the ip.

The simplest way to fix it would be to run an instance of avahi-publish & per domain, calculating the dynamic local ip each time... no, there is no simple way. Live with the single domain; it's better than before anyway.
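
For the curious, here is roughly what the not-so-simple way would look like. This is a sketch under assumptions: the helper names are hypothetical, the ip is taken from the route the kernel would use to reach 1.1.1.1, and nothing republishes the names when that ip changes.

```shell
# Hypothetical helpers for publishing extra *.local names.

# Extract the source address from one line of `ip route get` output.
src_of() {
    awk '{ for (i = 1; i < NF; i++) if ($i == "src") { print $(i + 1); exit } }'
}

# Ask the kernel which local address it would use to reach the outside world.
# 1.1.1.1 is only a routing target here; no packet is sent.
current_ip() {
    ip -4 route get 1.1.1.1 | src_of
}

# Publish one extra name for this item until the background process is stopped.
# -a publishes an address record, -R skips the reverse (ip -> name) entry.
publish_alias() {
    avahi-publish -a -R "$1" "$(current_ip)" &
}

# Parser check on an illustrative line (the addresses are made up):
echo '1.1.1.1 via 192.168.0.1 dev eth0 src 192.168.0.48 uid 1000' | src_of   # prints: 192.168.0.48
```

Usage would be `publish_alias git.local` and `publish_alias server.local`, repeated after every ip change, which is exactly why the single domain wins.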

Optionally, advertise ssh and sftp as services using the example files shipped with avahi:

# cp /usr/share/doc/avahi/sftp-ssh.service /etc/avahi/services/

# cp /usr/share/doc/avahi/ssh.service /etc/avahi/services/

# systemctl restart avahi-daemon.service

Quickly repeat on the second item, then check from both that they aren't alone. Set up an ssh connection between the two items by ip. Then just replace the ip with the local domain:

$ ssh my_user@destination.local -i ~/.ssh/my_key

When everything works, we add the magic words to ~/.ssh/config:

Host destination

HostName destination.local

IdentityFile ~/.ssh/my_key

User my_user

An alias for ssh was magically created and fully configured:

$ ssh destination

That's all. Let's try exchanging files and executing commands remotely:

$ cd /tmp/

$ date > temp_file

$ echo "source" >> temp_file

$ scp temp_file destination:

$ ssh destination 'echo destination >> ~/temp_file'

$ ssh destination 'cat ~/temp_file'

Uru ru ru, we can now exchange files and remotely execute commands. And we can use domains that aren't tied to an ip!

There are no multiple domains on a single item, but I can live with that.

You can apply the same to NFS and rsync and git!!!

And mDNS is also the basis of IoT and other home-assistant networks!

#linux

#multicastdns

#mdns

#dns